Bagging Ensemble Selection - a new ensemble learning strategy

When to use Bagging Ensemble Selection?

- accuracy is critical (many domains)

- don’t need to understand the model (some domains)

- training cost is NOT critical (access to low cost clusters)

- need to optimize a special error metric or a combination of metrics

- potentially, non-stationary time series, because BES utilises the out-of-bag sample and has 'built-in' feature selection

- data mining competitions :-)

We have published two papers on the Bagging Ensemble Selection algorithm, covering both classification and regression problems.

- Quan Sun and Bernhard Pfahringer. Bagging Ensemble Selection for Regression. In Proceedings of the 25th Australasian Joint Conference on Artificial Intelligence (AI'12), Sydney, Australia, pages 695-706. Springer, 2012. (draft | presentation pdf)

- Quan Sun and Bernhard Pfahringer. Bagging Ensemble Selection. In Proceedings of the 24th Australasian Joint Conference on Artificial Intelligence (AI'11), Perth, Australia, pages 251-260. Springer, 2011. (draft | presentation pdf)

The BESTrees algorithm

BESTrees is a tree-ensemble-based BES implementation. It is relatively fast and supports multiple cores: using default parameters and 4 cores, the algorithm took 1 minute to train on a dataset with 100,000 instances and 100 attributes on a 2.8 GHz AMD PC.

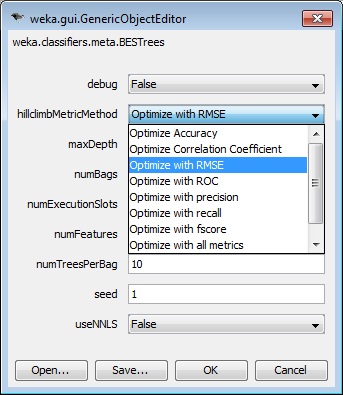

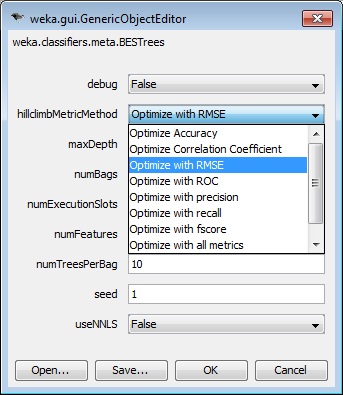

The user can choose one of 9 target evaluation metrics to optimize. For regression problems, the algorithm also supports the NNLS ensemble pruning strategy.
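As a rough illustration of what optimizing a chosen metric can look like, here is a minimal sketch of greedy forward ensemble selection (with replacement) that uses RMSE as the target metric. This is not the BESTrees source code: the class name `EnsembleSelectionSketch`, its methods, and the toy predictions are made up for illustration, and the small "hillclimb" set simply stands in for an out-of-bag sample.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative sketch only: greedy forward ensemble selection for regression. */
class EnsembleSelectionSketch {

    /** Root mean squared error between predictions and targets. */
    static double rmse(double[] pred, double[] y) {
        double sum = 0.0;
        for (int i = 0; i < y.length; i++) {
            double d = pred[i] - y[i];
            sum += d * d;
        }
        return Math.sqrt(sum / y.length);
    }

    /**
     * preds[m][i] is model m's prediction for hillclimb instance i.
     * Returns the indices of the selected models; a model may be picked more than once.
     */
    static List<Integer> select(double[][] preds, double[] y, int maxSteps) {
        int n = y.length;
        double[] sum = new double[n];  // running sum of the selected models' predictions
        List<Integer> chosen = new ArrayList<Integer>();
        double bestSoFar = Double.POSITIVE_INFINITY;

        for (int step = 0; step < maxSteps; step++) {
            int bestModel = -1;
            double bestErr = bestSoFar;
            for (int m = 0; m < preds.length; m++) {
                // Error of the averaged ensemble if model m were added.
                double[] avg = new double[n];
                for (int i = 0; i < n; i++) {
                    avg[i] = (sum[i] + preds[m][i]) / (chosen.size() + 1);
                }
                double err = rmse(avg, y);
                if (err < bestErr) {
                    bestErr = err;
                    bestModel = m;
                }
            }
            if (bestModel < 0) {
                break;  // no candidate improves the metric, so stop
            }
            chosen.add(bestModel);
            for (int i = 0; i < n; i++) {
                sum[i] += preds[bestModel][i];
            }
            bestSoFar = bestErr;
        }
        return chosen;
    }

    public static void main(String[] args) {
        double[] y = {1.0, 2.0, 3.0, 4.0};
        double[][] preds = {
            {1.5, 2.5, 3.5, 4.5},  // model 0: biased high by 0.5
            {0.5, 1.5, 2.5, 3.5},  // model 1: biased low by 0.5
            {4.0, 1.0, 0.0, 9.0}   // model 2: poor fit
        };
        System.out.println(select(preds, y, 10));  // prints [0, 1]
    }
}
```

In this toy case the two biased models cancel each other out, so the selection stops after picking both and never adds the poor model. Swapping `rmse` for another error function is all it takes to target a different metric in this sketch.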

WEKA source code for NNLS (non-negative least squares): NNLS.java
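To give a feel for how non-negative least squares can prune an ensemble, the sketch below fits non-negative weights to model predictions with plain projected gradient descent. It is independent of NNLS.java and may well use a different solver; the class name `NnlsSketch`, the method `solve`, and the toy data are assumptions made for this example only.

```java
import java.util.Arrays;

/** Illustrative sketch only: non-negative least squares by projected gradient descent. */
class NnlsSketch {

    /**
     * Minimises 0.5 * ||A w - y||^2 subject to w >= 0.
     * a[i][j] is model j's prediction for instance i; y[i] is the target value.
     */
    static double[] solve(double[][] a, double[] y, int iterations) {
        int n = a.length;
        int m = a[0].length;

        // trace(A^T A) is an upper bound on the largest eigenvalue of A^T A,
        // so a step size of 1 / trace keeps the iteration from diverging.
        double trace = 0.0;
        for (int i = 0; i < n; i++) {
            for (int j = 0; j < m; j++) {
                trace += a[i][j] * a[i][j];
            }
        }
        if (trace == 0.0) {
            return new double[m];  // all predictions are zero; any w is optimal
        }
        double step = 1.0 / trace;

        double[] w = new double[m];  // start from all-zero weights
        double[] residual = new double[n];
        for (int it = 0; it < iterations; it++) {
            // residual = A w - y
            for (int i = 0; i < n; i++) {
                double r = -y[i];
                for (int j = 0; j < m; j++) {
                    r += a[i][j] * w[j];
                }
                residual[i] = r;
            }
            // Gradient step on each weight, then projection onto w >= 0.
            for (int j = 0; j < m; j++) {
                double grad = 0.0;
                for (int i = 0; i < n; i++) {
                    grad += a[i][j] * residual[i];
                }
                w[j] = Math.max(0.0, w[j] - step * grad);
            }
        }
        return w;
    }

    public static void main(String[] args) {
        double[] y = {1.0, 2.0, 3.0};
        double[][] a = {
            {2.0, -1.0},  // column 0 predicts 2 * y, column 1 predicts -y
            {4.0, -2.0},
            {6.0, -3.0}
        };
        // Weights approach 0.5 for model 0 and 0 for model 1.
        System.out.println(Arrays.toString(solve(a, y, 200)));
    }
}
```

Models whose weight ends up at zero contribute nothing to the weighted prediction and can be dropped, which is the pruning effect. A dedicated active-set solver would normally converge faster than this simple iteration.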

BESTrees can be downloaded from: BESTrees.zip

How to install BESTrees? (for Java 1.6+ and WEKA 3.7.7+)

Download WEKA (I have tested WEKA 3.7.7, which works fine) for your system from: http://www.cs.waikato.ac.nz/ml/weka/index.html

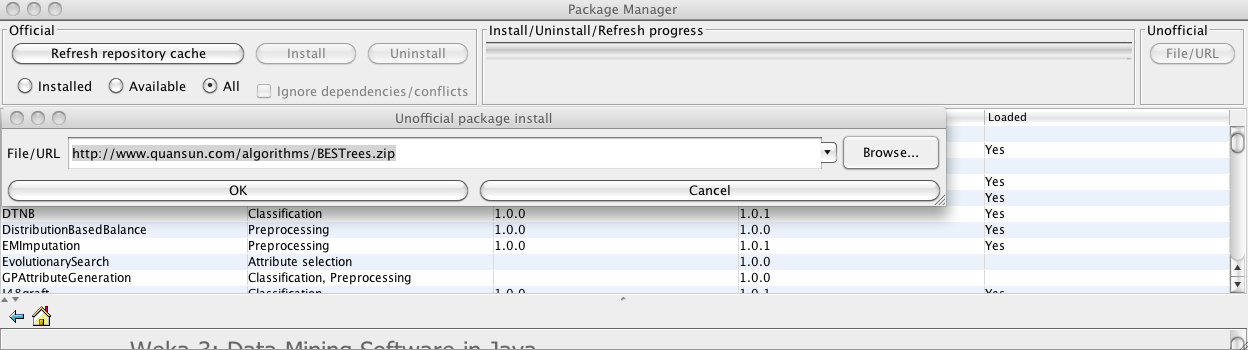

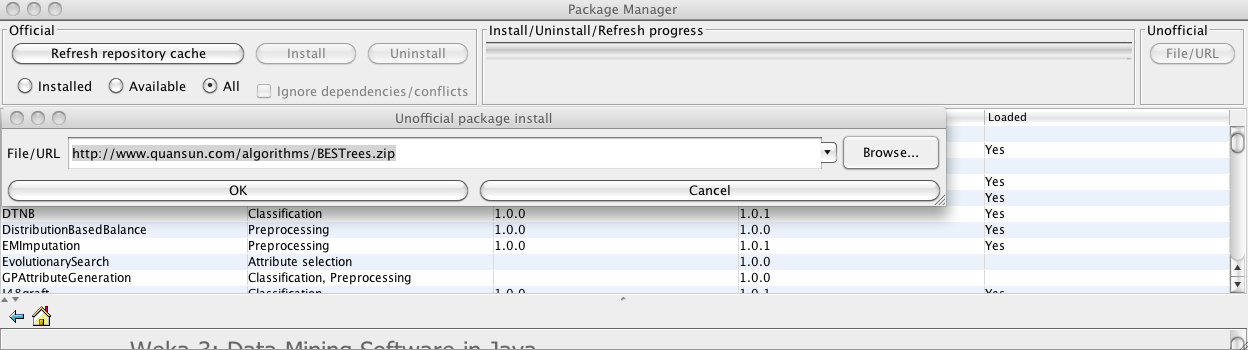

Open the WEKA package manager, click the "File/URL" button, and type: http://www.quansun.com/algorithms/BESTrees.zip

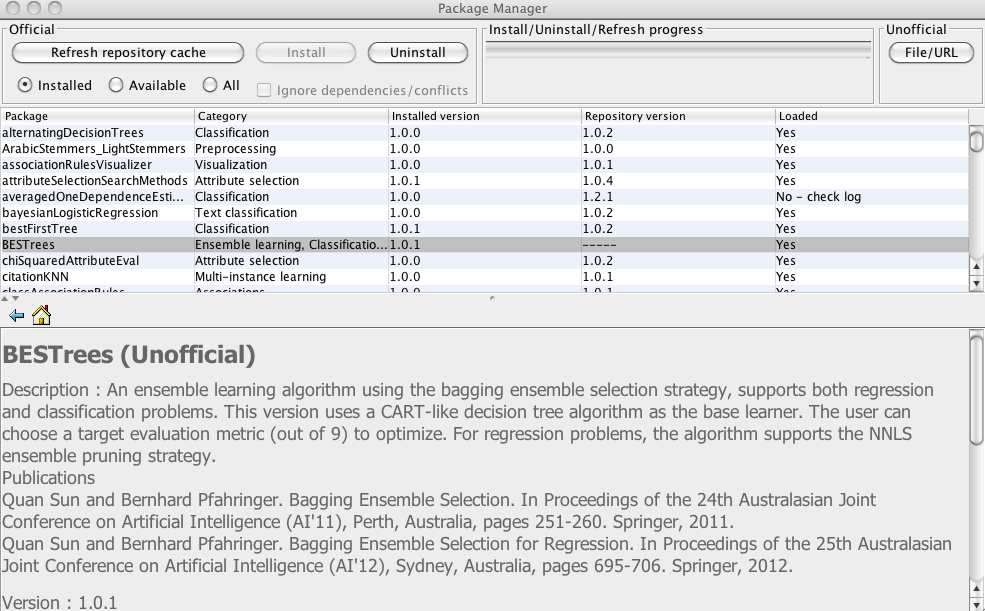

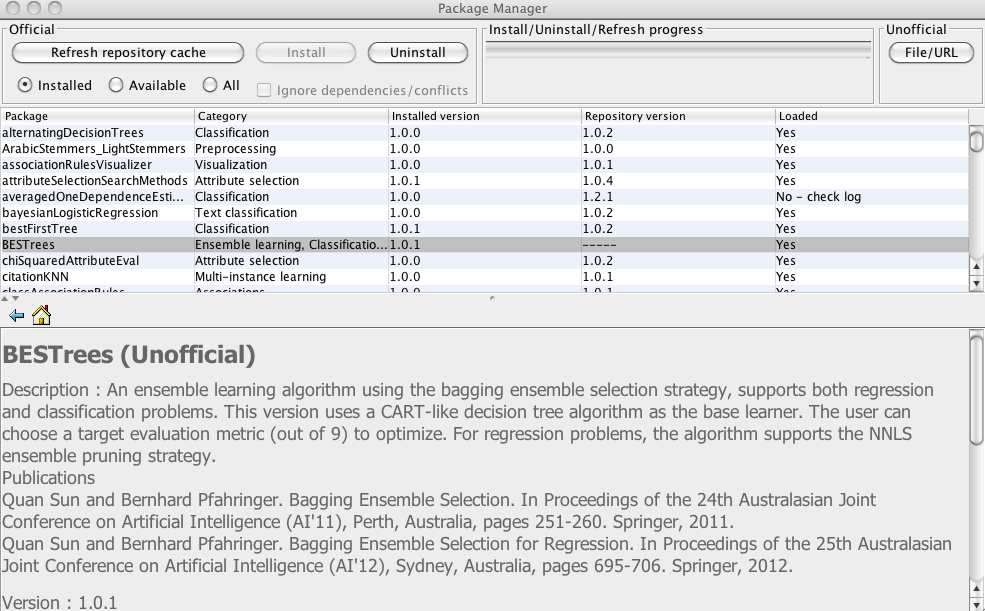

After installation, you will see the BESTrees algorithm displayed in the list.

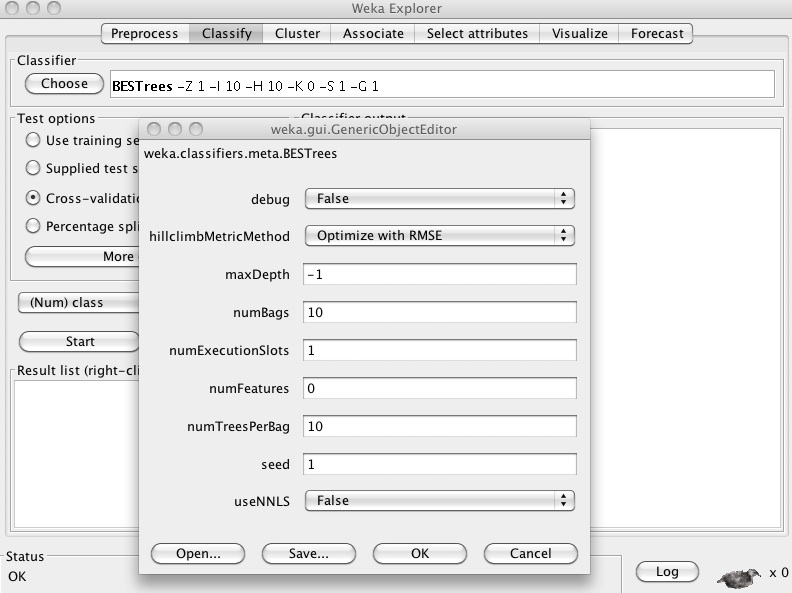

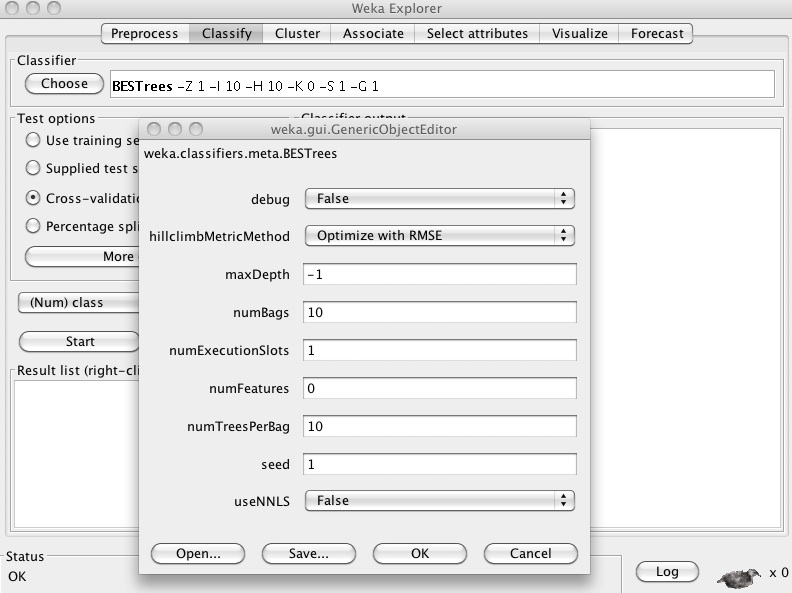

Use BESTrees in WEKA

BESTrees supports 9 metrics.

FAQ

What should I do if the default parameter setting doesn't work well on my dataset?

Try setting the 'numFeatures' option to the total number of features in your dataset. Alternatively, set it to a very large number, e.g., 99999, and the base learner (REPTree) of BESTrees will use all features automatically.

Try increasing the values of 'numBags' and 'numTreesPerBag', e.g., to 30 and 100, respectively.
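For intuition about what a larger 'numBags' buys, the following sketch draws bootstrap bags and reports how much of the data falls out-of-bag in each one. It reads 'numBags' as the number of bootstrap samples, which is an assumption based on the option name; the class `BootstrapSketch`, its methods, and the dataset size of 1000 are hypothetical and not part of BESTrees.

```java
import java.util.Random;

/** Illustrative sketch only: bootstrap bags and their out-of-bag fractions. */
class BootstrapSketch {

    /** Draws n indices with replacement and marks which instances landed in the bag. */
    static boolean[] drawBag(int n, Random rng) {
        boolean[] inBag = new boolean[n];
        for (int k = 0; k < n; k++) {
            inBag[rng.nextInt(n)] = true;
        }
        return inBag;
    }

    /** Fraction of instances that were never drawn, i.e. the out-of-bag sample. */
    static double outOfBagFraction(boolean[] inBag) {
        int oob = 0;
        for (boolean b : inBag) {
            if (!b) {
                oob++;
            }
        }
        return (double) oob / inBag.length;
    }

    public static void main(String[] args) {
        int numBags = 30;         // value suggested in the FAQ above
        int numInstances = 1000;  // hypothetical dataset size
        Random rng = new Random(42);
        for (int b = 0; b < numBags; b++) {
            boolean[] inBag = drawBag(numInstances, rng);
            System.out.printf("bag %d: out-of-bag fraction %.3f%n", b, outOfBagFraction(inBag));
        }
    }
}
```

Each bag leaves a little over a third of the instances out-of-bag (the expected fraction tends to 1/e, about 0.368), and every bag holds out a different subset, so more bags means more distinct out-of-bag samples.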

Any questions?

Please feel free to contact me if you have any questions:

quan.sun.nz@gmail.com

Last updated 28/01/2013